Hi everyone! I know it has been a long, long time since I last wrote on my blog! I got pulled in so many directions and commitments since I was promoted to the rank of Associate Professor (and got tenured along the way). I also plead guilty to being more invested in social media, in particular the blue bird app. Nevertheless, I am back with one of my favorite science-communication outreach topics: aluminum adjuvants and vaccines!

Physicians for Informed Consent or Plumbers for Water is Wet

Physicians for Informed Consent (PIC) is a known group of anti-science folks, draping themselves as “speaking truth about medicine”, that looks more like the Mos Eisley cantina than a reputable source of information. Look at their board members and you will recognize infamous names that made their fortune spewing anti-vaccine tropes, if not outright violating their Oath.

Last week, they decided to push their latest leaflets on Twitter, allegedly to “inform” patients about the dangers of vaccines through “Vaccines Risk Statements”. One of them covers aluminum adjuvants.

At first, things look good. The design is slick, the references are there and seem to come from reasonable sources. But as usual, the devil is in the details, and in how their wording is crafted to be deceptive. I will break down my rebuttal bullet point by bullet point.

Back to Basics: Pharmacokinetics 101

Before we talk about what is wrong with this pamphlet, it is important that we understand the pharmacokinetics of aluminum. Beware! It isn’t very easy, and over the last 30 years (taking the work of Priest ND as seminal on that topic), many models have been developed to help understand it.

In pharmacokinetics, we work through the acronym ADME: Absorption, Distribution, Metabolism, and Elimination. There are no exceptions, unless you are administering a drug via the intravenous route (in which case the A is skipped).

Any route OUTSIDE the venous system has to deal with absorption. Three factors determine the extent (how much) and rate (how fast) at which a drug is absorbed: its own chemical structure, its formulation (how it was packaged to be administered), and of course the environment in which the drug is being absorbed. These are usually encompassed in a term called “bioavailability”. The bioavailability (F) basically tells us how much drug ends up circulating in the body versus how much was administered. F is usually expressed as a number from 0 to 1, with 1 being the maximum (1 = 100% of the drug is bioavailable). By convention, a drug given via the intravenous (IV) route achieves F=1 (100% bioavailable), while extravascular routes rarely achieve such a value. It can be very close, but it can also be very far.

Overall, we assume all extravascular routes share the same fate and issues, with a slight exception for the oral route (ingestion, or per os), in which first-pass metabolism by the liver can extract a significant amount of a drug (if this drug is known to be highly metabolized).

Upon absorption, the drug circulates in the bloodstream (systemic circulation) and is considered as distributed in the “central compartment”. From that central compartment, the drug can be distributed to peripheral tissues (e.g. bones, heart, muscles, fat tissue, brain, spleen…) and will diffuse into these tissues until a dynamic equilibrium between the central and peripheral compartments is reached. Because the drug is found in the central compartment, it cannot avoid passing through the liver and kidneys. These are important, as the liver can metabolize the drug (and render it inactive), while both the liver and kidneys extract and eliminate the drug from the body. This is very important, as virtually every drug will be eliminated from the body in some form (metabolized or non-metabolized) and at some point: some will be eliminated quickly, and some will take a long, long time. We quantify that time using the concept of half-life (t1/2): the time needed to eliminate 50% of a drug from the body.
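To make the half-life idea concrete, here is a minimal sketch assuming simple first-order (exponential) elimination from a single compartment. The function name and the 6-hour half-life are made up for illustration; real drugs, and aluminum in particular, follow more complex multi-compartment kinetics.

```python
def fraction_remaining(elapsed_hours, half_life_hours):
    """Fraction of a drug still in the body after `elapsed_hours`.

    Assumes simple first-order elimination from one compartment,
    which is a textbook simplification.
    """
    return 0.5 ** (elapsed_hours / half_life_hours)


if __name__ == "__main__":
    HALF_LIFE_HOURS = 6.0  # hypothetical value, for illustration only
    for n in range(1, 6):
        left = fraction_remaining(n * HALF_LIFE_HOURS, HALF_LIFE_HOURS)
        print(f"after {n} half-lives: {left:.1%} of the drug remains")
```

Each half-life halves whatever is left, which is why a drug with a long half-life can linger at low levels for a very long time.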

Pharmacokinetics of aluminum: where are we standing in 2023?

As of today, research on the pharmacokinetics of aluminum has proven one thing: it is very complicated, and our understanding keeps improving as our pharmacokinetic models get better at describing what happens in our body.

I will cite the most recent paper from Karin Weisser, which PIC conveniently omitted despite it being published well before their pamphlet (https://pubmed.ncbi.nlm.nih.gov/34390355/).

Aluminum adjuvants come in the form of salts, mostly the hydroxide (Al(OH)3) and the phosphate (AlPO4). Like any salt added to a water-containing (aqueous) solution, they dissolve into ions, in this case ultimately forming Al3+ ions. This ion is the one relevant to us, because it shares similarities with other ions (like ferric iron, Fe3+). Ions like to find binding partners carrying opposite charges, and in our body Al3+ has two major partners: citrate and transferrin (Tf).

These aluminum salts have another feature: they dissolve slowly in our body (because our body pH is close to the pH at which their solubility is the lowest), so it takes a long time for them to dissolve completely. Based on references #23 and #25 of their pamphlet, we estimate that if I inject 100microg of Al(OH)3 into animals, between 0.4microg (#25) and 0.6microg (#23) will have reached the circulation by 24 hours.

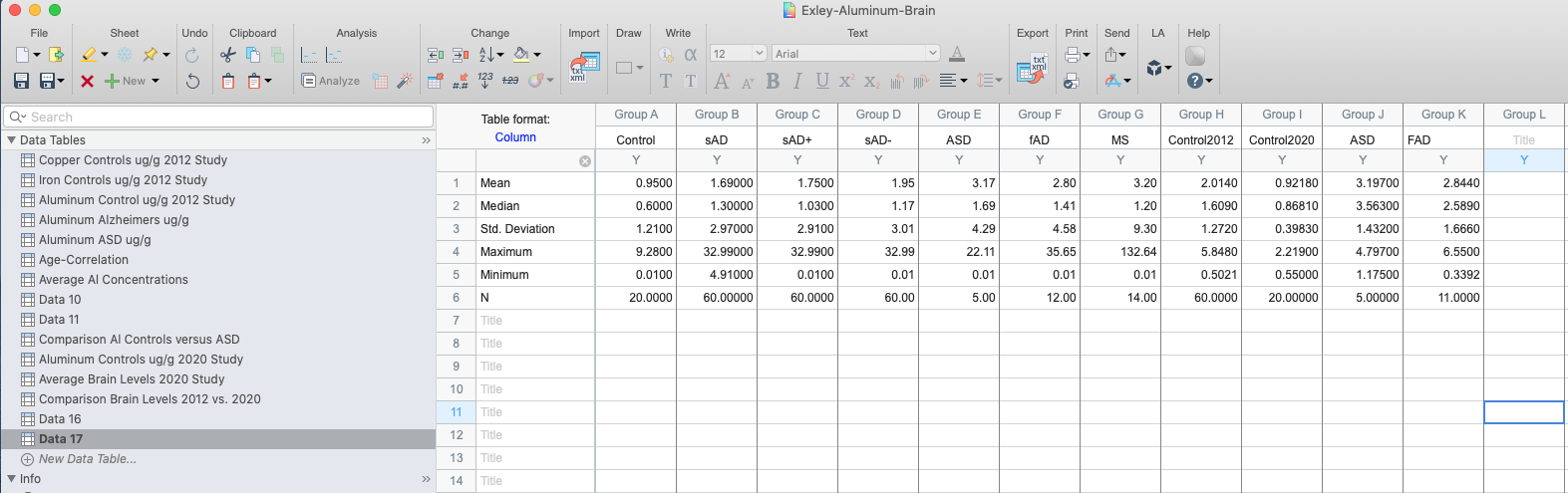

Aluminum data from #25: V1 denotes Al(OH)3 injected into rats, V3 denotes AlPO4, and V2 a mixture of both Al(OH)3 and AlPO4.

To keep it simple, we will use the average of these two and estimate it as 0.5microg/day entering the bloodstream, or 0.5% of the dose per day. This means that ultimately the whole amount (100microg) will be 100% absorbed from the site; it just requires a long, long time (at 0.5%/day, about 200 days for complete absorption). However, for aluminum-based adjuvants, this is not a problem. Aluminum salts, as crystalline solids, are chemically inert. The species of concern is the dissolved form, Al3+.
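The arithmetic behind that 200-day estimate can be sketched as follows, assuming the release from the injection site stays constant at 0.5% of the injected dose per day. That constant rate is a simplification, and the function names are mine, purely for illustration.

```python
RATE_PER_DAY = 0.005  # 0.5% of the injected dose per day (average of refs #23 and #25)


def absorbed_per_day_ug(dose_ug, rate_per_day=RATE_PER_DAY):
    """Amount (microg) leaving the injection site each day."""
    return dose_ug * rate_per_day


def days_to_full_absorption(rate_per_day=RATE_PER_DAY):
    """Days until the whole dose has left the site at a constant daily rate."""
    return 1.0 / rate_per_day


if __name__ == "__main__":
    dose_ug = 100.0  # example dose used in the text
    print(f"{absorbed_per_day_ug(dose_ug):.2f} microg/day enter the bloodstream")
    print(f"about {days_to_full_absorption():.0f} days for complete absorption")
```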

The next important point is: how much is a concern? Aluminum is the third most abundant element in Earth’s crust, and ever since our unicellular ancestor LUCA came along, life has had to deal with the aluminum around it. We eat, drink and breathe aluminum, and we are exposed to it even before we come into this world. A study by Singh (Singh K et al., Int J Clin Biochem 2021) detected Al3+ in the umbilical cord of healthy newborns and estimated it at about 14.4microg/L (we don’t have a consensus on “healthy levels” of aluminum, but lab references usually list it as <10-16microg/L).

Vaccines are mostly injected via the intramuscular (IM) route, with the exception of live-attenuated vaccines (which are injected subcutaneously). Muscle tissue has two major features: it is a fairly dense tissue (packed with muscle cells), and it is one of the least perfused organs (poor vessel density). These matter because the chance of hitting a blood vessel is close to zero, and because aluminum dissolution will be very slow (thanks to a low extracellular water content and slow blood flow).

This was demonstrated by Weisser and colleagues in reference #25, in which the amount of aluminum detected in the plasma of rats after IM administration was no different from the saline control (vehicle).

This is how aluminum behaves in our body, and which organs it distributes to. For simplicity, we will replace “gut” with “muscle” when discussing the intramuscular route. The amount of Al3+ absorbed from the injection site will be about 0.5% of the injected dose per day.

You will also notice that Al3+ is eliminated mostly via the kidney (renal) route, in the form of urine. In humans, 90% of Al-citrate (given by the IV route) is eliminated via the kidney route (uri, in brown), with the remaining 10% being eliminated over a period of up to 150 weeks.

Figure 7 from the study by Weisser and colleagues (Hethey C et al., Arch Toxicol 2021)

What really matters here is that:

- We are exposed to aluminum before we are born, and our main exposure to aluminum comes from the environment, due to its abundance.

- Aluminum adjuvants add only a marginal burden to a baby’s body, as the aluminum enters the bloodstream slowly and in minute amounts that appear not to change the plasma concentration.

- Aluminum entering the bloodstream is mostly eliminated (90%) within 24 hours, mainly by the kidney (pee-pee) route.

As long as there is no accumulation (due to a massive amount injected IV) or serious kidney malfunction (kidney disease), aluminum from adjuvants does not seem to be a problem.

Let’s PICkle the claims – Claim #6

The first problem is found with Claim #6. The authors here use the classical anti-vaccine trope of “injection versus ingestion”. As we have seen, extravascular routes face the same challenges and are therefore not that different, with some exceptions. Aluminum is no exception. Figure 2a is factually correct, as the existing literature estimates oral absorption at 0.1-0.3% per meal intake (1-3microg absorbed out of 1000microg). The problem starts with 2b. The number advanced here is not documented and does not match the limit set by the FDA for the maximum amount of aluminum in IV bags (I emphasize the word IV: these bags are infused directly into a patient’s vein), which is 5microg/kg/day (https://www.fda.gov/media/163799/download?attachment).

The second issue is that the authors blatantly mix apples with oranges and call it a day. Sure, the amount absorbed will be 100% at some point, but only a tiny fraction of the injected dose is absorbed each day (0.5%/day). So although it is higher than the oral route, the IM route remains way below the IV route. To reach 5microg/kg/day from a vaccine, we would need to deliver 1000microg to a baby weighing 1kg (which makes it a severely premature baby, and premature babies do not follow the CDC schedule until they are discharged). For a healthy newborn of 3kg, that would be 3000microg of aluminum in a single shot. No vaccine contains such an amount.
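For those who want to check the numbers, here is a small sketch of that back-calculation. It assumes a constant absorption of 0.5% of the injected dose per day and simply asks which injected dose would deliver the FDA IV limit on a given day; the function and constant names are mine, for illustration only.

```python
FDA_IV_LIMIT_UG_PER_KG_DAY = 5.0  # FDA limit for aluminum in IV fluids, as cited in the text
IM_RATE_PER_DAY = 0.005           # assumed 0.5% of the injected dose absorbed per day


def im_dose_to_match_iv_limit(weight_kg,
                              limit_ug_per_kg_day=FDA_IV_LIMIT_UG_PER_KG_DAY,
                              rate_per_day=IM_RATE_PER_DAY):
    """Injected dose (microg) whose daily absorbed amount equals the IV limit."""
    return limit_ug_per_kg_day * weight_kg / rate_per_day


if __name__ == "__main__":
    for weight_kg in (1.0, 3.0):
        dose_ug = im_dose_to_match_iv_limit(weight_kg)
        print(f"{weight_kg:.0f} kg baby: {dose_ug:.0f} microg in a single shot")
```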

Let’s PICkle the claims – Claim #7

Again, here we have a kernel of truth wrapped in a thick layer of intentional misinformation. This table again does not match the FDA limit, putting it down to 20% of the maximum limit we have documented. Moreover, the authors do not calculate how much aluminum from a vaccine leaks from the injection site into the bloodstream. Let’s do the math, with an average absorption of 0.5%/day (see above). Assuming we injected 850microg of aluminum into a newborn today, the extra burden of aluminum that day will be:

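850*0.5% = 425/100 = 4.25microg for a 3.3kg baby, or 1.29microg/kg/day. Again, this is absolutely a worst-case scenario, and even there we are only slightly exceeding the lowered limit in their table (about 20% of the FDA limit), while staying far below the FDA limit of 5microg/kg/day (the real-life scenario is 250microg from the HepB vaccine, which would lead to a burden of 1.25microg/day, or about 0.38microg/kg/day).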
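The same calculation as a short sketch, again assuming 0.5% of the injected dose is absorbed per day and a 3.3kg newborn (the function name is hypothetical). The worst case comes out at roughly 1.3microg/kg/day, and the HepB-only case at roughly 0.4microg/kg/day.

```python
def daily_burden(dose_ug, weight_kg, rate_per_day=0.005):
    """Return (microg/day, microg/kg/day) entering the bloodstream.

    Assumes 0.5% of the injected dose is absorbed per day.
    """
    per_day = dose_ug * rate_per_day
    return per_day, per_day / weight_kg


if __name__ == "__main__":
    for label, dose_ug in (("worst case", 850.0), ("HepB only", 250.0)):
        per_day, per_kg = daily_burden(dose_ug, weight_kg=3.3)
        print(f"{label}: {per_day:.2f} microg/day, {per_kg:.2f} microg/kg/day")
```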

Again, PIC is not only showing a lack of fundamental knowledge in pharmacokinetics, but also leading a misinformation campaign by inducing fear with fudged numbers.

Let’s PICkle the claims – Claim #8

This one is a bit more complicated, as they mislead the audience with a study led by Mitkus and colleagues (Ref #20) while citing their own non-reviewed study as well as Neil Z Miller, a journalist known… to have received his information on vaccines from outer space!

But let’s avoid the ad hominem and focus on the Mitkus paper. Mitkus relied on data from a study in which rats were fed aluminum-containing food while pregnant and kept on that diet after giving birth. The amount was considered the NOAEL (No Observed Adverse Effect Level), with neither the mother nor the litter showing signs of aluminum toxicity. Using this data and PK models, Mitkus built an estimate of the equivalent aluminum burden for humans, and concluded the following:

The amount of aluminum coming from vaccines, on top of the daily burden from food and drink (in the form of milk, breast or formula), did not exceed the minimal risk level (MRL) set by the ATSDR, whether for infants at the median weight (50th percentile) or at the 5th percentile. At that time (2011), this was the best data we had, and it was further confirmed by the clinical observations of Movsas in preterm infants receiving their vaccines 24 hours before discharge (JAMA Ped 2013), and by the absence of any correlation between blood/hair aluminum levels and vaccination status (Karwowski et al., Acad Ped 2018). Writing a pamphlet in 2023 and omitting all the literature that confirmed Mitkus’ initial 2011 estimate is no longer just poor bibliographical skills. It is an intentional attempt to hide the literature published between 2011 and 2023, with the sole goal of deceiving. In science, you have to cite the WHOLE literature available at the time of publication, regardless of whether it aligns with your findings or not.

Let’s PICkle the claims – Claim #9

In this last claim, the authors strike again with a deceptive graph showing syringes (to play on the fear of needles that so many people share) and the amount of aluminum in different vaccines. At first glance, the numbers are correct, but the devil is in the details. The authors conclude that babies get 1225microg of aluminum by 6 months of age. But they omit one thing: this amount is not given at once, it is given in various amounts over 6 months, and this is very important. As we have seen, what really matters is the amount entering the bloodstream, and that the patient has functional kidneys. A newborn has mostly functional kidneys, with kidney function similar to an adult’s by 2 months of age. Here are the CDC recommended schedules, following guidelines set by two major medical associations, the AAFP (Family Medicine) and the AAP (Pediatrics).

We can see that, aside from HepB, there is a 2-month interval between doses, which not only matches the recommended schedule for inducing proper immunity (immune cell memory) but also gives time for a good share of the aluminum to clear the injection site (~30%, which is what 0.5%/day adds up to over 60 days), avoiding an accumulation of the aluminum burden.

Again, the authors are stoking fears and exaggerating their claims to lie to their readership. Fourth claim, fourth strike as fake information.

Conclusions

To conclude, I would like to emphasize their disclaimer note:

PIC knows they are exaggerating their claims, they know they are misleading, and they are covering their asses legally with this disclaimer. They know their claims are bull, so much so that they recommend seeking medical advice elsewhere.

I called them out on Twitter, and their only response was a laconic “we have this information posted on our pamphlet” (you are my witnesses: it was not), before they blocked me within 24 hours.

PIC is not only an ePIC failure when it comes to pharmacokinetics, they are also ePIC grifters who profit personally from misleading patients. When it comes to their communication, behave as you would when facing a PIrCipne: avoid interactions at all costs.